85% of contact center leaders say their organization is prepared to implement AI. When researchers dug a little deeper and asked whether they felt fully prepared to execute at scale, that number dropped to 34%.

That’s not a small gap. More than half of the leaders who think they’re ready aren’t.

And the uncomfortable part? Most of them don’t know it yet.

If your organization has rolled out some form of AI in the last couple of years, there’s a reasonable chance you’re somewhere in that middle group. Not ignoring AI. Not behind on it. Just not actually getting much out of it, and not entirely sure why.

This isn’t a piece about why you should use AI. You already know that. It’s about how to tell whether what you’ve got is actually working, or whether you’ve accidentally joined the majority of contact centers that bought something, called it AI, and moved on.

How “Having AI” Became a Checkbox

It happened quickly and quietly. AI went from being a future consideration to a present expectation, and a lot of organizations responded the way organizations usually respond to fast-moving pressure: they did something visible.

They bought a chatbot. They added AI to a vendor contract. They ran a pilot on one team. They sat through a product demo that showed a beautiful dashboard and clicked “yes” on the upsell.

None of that is wrong. But none of it is execution either.

The challenge is that AI in a contact center isn’t a switch you flip. It’s a system you build. And when you skip the building part, you end up with something that technically exists but practically doesn’t do much. Your team works around it. Your metrics don’t move. And six months later, when someone asks how the AI rollout is going, the honest answer is “fine” because nobody wants to admit it’s not really doing anything.

McKinsey’s research backs this up: while AI usage is widespread, nearly two-thirds of organizations are still stuck in pilots. And it shows up in the infrastructure too. The average contact center now manages 3.9 different technology vendors, with only 3% operating on a single unified platform. Fragmented tools mean fragmented data, and fragmented data is exactly why AI can’t deliver what it promised.

This is what researchers mean when they talk about the gap between AI ambition and AI delivery. It’s not that people don’t want results. It’s that buying the tool and embedding it into how your contact center actually operates are two very different things, and most organizations have only done the first one.

Three Signs You’re in the Fake Progress Trap

1. Your AI handles one thing when it could handle five

The most common version of this: a chatbot that answers FAQs while your agents still handle everything else manually. Or speech analytics that’s turned on but that nobody reviews. Or intelligent routing that was configured during implementation and hasn’t been touched since.

AI tools in contact centers are almost always capable of more than they’re being used for. If yours is sitting in a corner doing one job while your team grinds through everything else the same way they always have, you haven’t implemented AI. You’ve implemented a feature.

2. Your AI results live in a report nobody reads

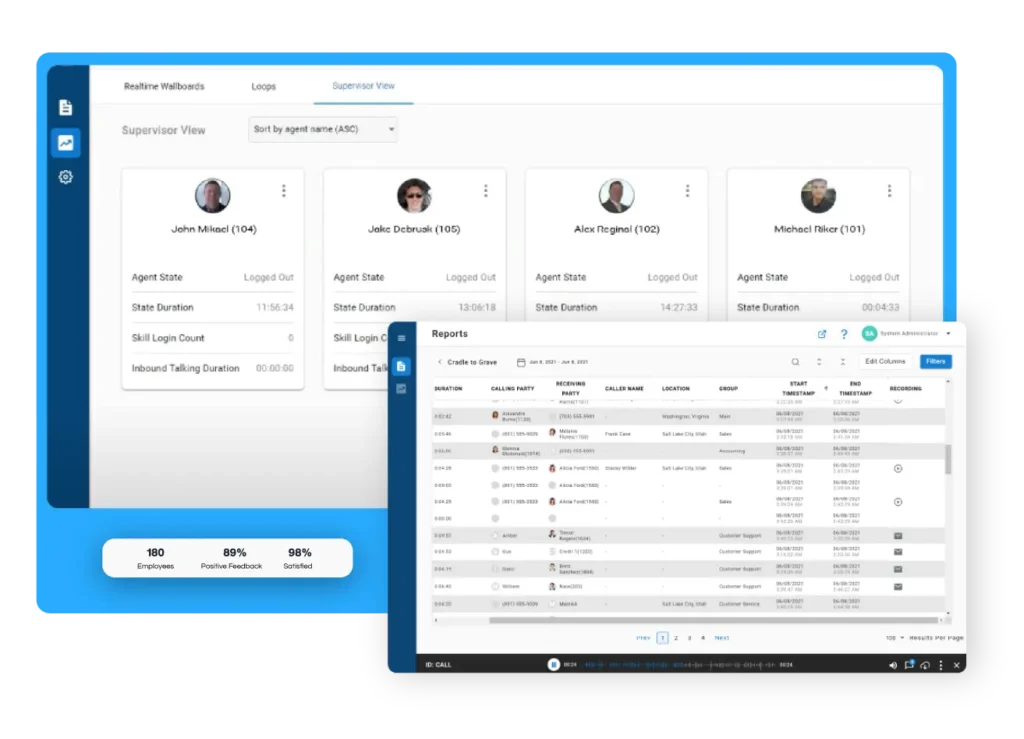

If the only place your AI is showing up is in a monthly summary that gets glanced at and filed away, it’s not changing how your contact center operates. Real AI integration shows up in real time: in the queue, in the coaching conversation, in the moment a supervisor catches a problem before it becomes a complaint.

Data that arrives after the fact doesn’t help the agent who struggled on a call three weeks ago. Insight has to be timely to be useful.

3. Your team doesn’t trust it

This one is easy to miss because agents won’t always say it directly. But watch how they actually work. Do they wait for the AI suggestion and act on it, or do they click past it? Do they use the knowledge base the chatbot pulls from, or do they have their own workarounds? Do supervisors use the automated QA scores, or do they still pull random call samples the old way?

When your team has quietly decided the AI isn’t reliable, they work around it. And a tool people work around isn’t a tool that’s working.
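If you want a number to go with that observation, one rough way to see whether agents act on AI suggestions or click past them is an acceptance rate per agent. The sketch below is only an illustration: the `SuggestionEvent` record and its fields are hypothetical, and it assumes your platform can export one row per suggestion shown.

```python
from dataclasses import dataclass


@dataclass
class SuggestionEvent:
    """One AI suggestion shown to an agent (hypothetical export format)."""
    agent: str
    accepted: bool  # True if the agent acted on the suggestion


def acceptance_rates(events):
    """Return {agent: share of shown suggestions the agent acted on}."""
    shown = {}
    used = {}
    for event in events:
        shown[event.agent] = shown.get(event.agent, 0) + 1
        used[event.agent] = used.get(event.agent, 0) + (1 if event.accepted else 0)
    return {agent: used[agent] / shown[agent] for agent in shown}


# Made-up sample data, for illustration only.
events = [
    SuggestionEvent("agent_a", True),
    SuggestionEvent("agent_a", True),
    SuggestionEvent("agent_a", False),
    SuggestionEvent("agent_a", True),
    SuggestionEvent("agent_b", False),
    SuggestionEvent("agent_b", False),
    SuggestionEvent("agent_b", True),
    SuggestionEvent("agent_b", False),
]

rates = acceptance_rates(events)
for agent, rate in sorted(rates.items()):
    print(f"{agent}: {rate:.0%} of suggestions acted on")
```

A low rate doesn’t prove the AI is wrong. It’s a prompt to go ask the agents why they’re skipping it.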

What Real AI Execution Actually Looks Like

The contact centers that are in the 34%, the ones where AI is genuinely delivering, tend to have a few things in common.

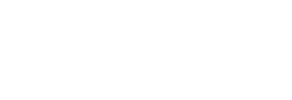

Their AI is connected to the whole workflow, not bolted onto the side of it. Routing decisions, coaching feedback, QA scoring, and real-time alerts are all running through the same system rather than sitting in separate tools that don’t talk to each other.

Their managers use AI data during the shift, not just after it. Supervisors can see what’s happening on the floor right now: which calls are running long, where sentiment is dropping, which agent might need a whisper in their ear before a situation escalates. That real-time visibility is what turns AI from a reporting tool into an operational one.

And critically, their agents actually trust it. That trust doesn’t come automatically. It comes from configuration that reflects how your specific contact center works, not just factory settings. It comes from training that helps agents understand what the AI is doing and why. And it comes from managers who use AI feedback as a coaching tool rather than a performance management hammer.

That last one matters more than most people realize. If your team thinks AI is watching them to catch mistakes, they’ll resist it. If they think it’s helping them handle hard calls better, they’ll use it.

Three Questions to Ask Yourself Right Now

If you’re not sure which side of the gap you’re on, start here.

Can you name a specific operational outcome that changed in the last 90 days because of your AI?

Not a feature you turned on. An outcome. Shorter handle times, fewer escalations, better first-contact resolution, improved QA scores. If you can’t name one, your AI isn’t embedded yet.

Do your agents and supervisors bring up the AI tools without being prompted?

If the answer is yes, even occasionally, that’s a signal they’re finding the tools genuinely useful. If they never come up unless someone from leadership mentions them, that’s worth paying attention to.

Is your AI connected to your actual performance data, or is it running separately?

If your AI analytics live in a different system from your core reporting, your team is doing double the work to find half the insight. Integration isn’t optional for real execution.
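The first question is easier to answer with numbers in front of you. As a minimal sketch, assuming you can export one record per day with a date and a metric such as average handle time, the snippet below compares the last 90 days with the 90 days before that. The record layout, the field names, and the sample values are all hypothetical, and a change in the metric doesn’t by itself prove the AI caused it.

```python
from datetime import date, timedelta
from statistics import mean


def window_change(records, metric, today, days=90):
    """Relative change in the mean of `metric` between the most recent
    `days`-day window and the window of the same length before it.

    Returns None if either window has no data or the prior mean is zero.
    """
    recent_start = today - timedelta(days=days)
    prior_start = today - timedelta(days=2 * days)
    recent = [r[metric] for r in records if recent_start <= r["day"] < today]
    prior = [r[metric] for r in records if prior_start <= r["day"] < recent_start]
    if not recent or not prior or mean(prior) == 0:
        return None
    return (mean(recent) - mean(prior)) / mean(prior)


# Made-up daily records, for illustration only.
records = [
    {"day": date(2024, 2, 1), "handle_time_sec": 400},
    {"day": date(2024, 3, 1), "handle_time_sec": 420},
    {"day": date(2024, 5, 1), "handle_time_sec": 360},
    {"day": date(2024, 6, 1), "handle_time_sec": 378},
]

change = window_change(records, "handle_time_sec", today=date(2024, 7, 1))
if change is None:
    print("Not enough data to compare the two windows.")
else:
    print(f"Average handle time changed by {change:+.1%} versus the prior 90 days.")
```

The same function works for escalations, first-contact resolution, or QA scores, as long as each record carries the metric under a consistent key.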

What Getting It Right Looks Like in Practice

Essential Credit Union came to Xima with a contact center that was working, but not well enough. Service levels were landing between 50% and 70%. Call handling was inconsistent. Supervisors didn’t have the real-time visibility they needed to coach effectively.

After implementing Xima’s AI-powered CCaaS platform, service levels climbed to a consistent 90%. That shift didn’t happen because they turned on a new tool and left it alone. It happened because the AI was connected to how calls were actually being routed, how agents were being coached, and how supervisors were tracking performance in real time.

That’s the difference between AI as a feature and AI as part of how your contact center runs.

The Bottom Line

The gap between 85% and 34% isn’t a technology problem. It’s an implementation problem. According to research covered by CX Today, the most common barriers aren’t budget or technology. They’re lack of internal AI expertise, data privacy concerns, and integration complexity. In other words, the things that come after you buy the tool.

It’s also a gap that’s easy to miss, because the early stages of fake progress feel a lot like real progress. You have the tool. You have the contract. You sat through the training. The box is checked.

But your handle times haven’t moved. Your agents are still doing things manually that the platform is supposed to handle. And somewhere in the back of your mind, you’ve been wondering whether you got the ROI you were promised.

If that sounds familiar, the answer isn’t to buy something new. It’s to actually use what you have. And if what you have isn’t built to be embedded, configured, and connected to how your team really works, that’s a different conversation worth having.

See how Xima’s AI-powered platform works in practice.